Atoms + Intelligence

Contours of Physical AI

Hi, I am Rhishi Pethe. I am an industrial systems engineer and product manager. I have spent many years working in transportation and logistics, digital supply chains, and automation and robotics in the food, beverage, and agriculture sectors. I have had stints at multiple startups, Amazon, and Google X.

This newsletter is about Automation and Physical AI.

But what the hell is physical AI?

Travis K’s company, Atom, just came out of stealth. (Travis K is the co-founder and former CEO of Uber.) Its mission is simple:

Physical automation to transform industry and move the world.

Automation and Physical AI are about using AI to act on atoms, not just bits, in industrial, commercial, and consumer applications.

Today’s post will help ground some key frameworks of physical AI. Over the next few months, I will dig deep into each of these aspects of Physical AI.

Physical AI

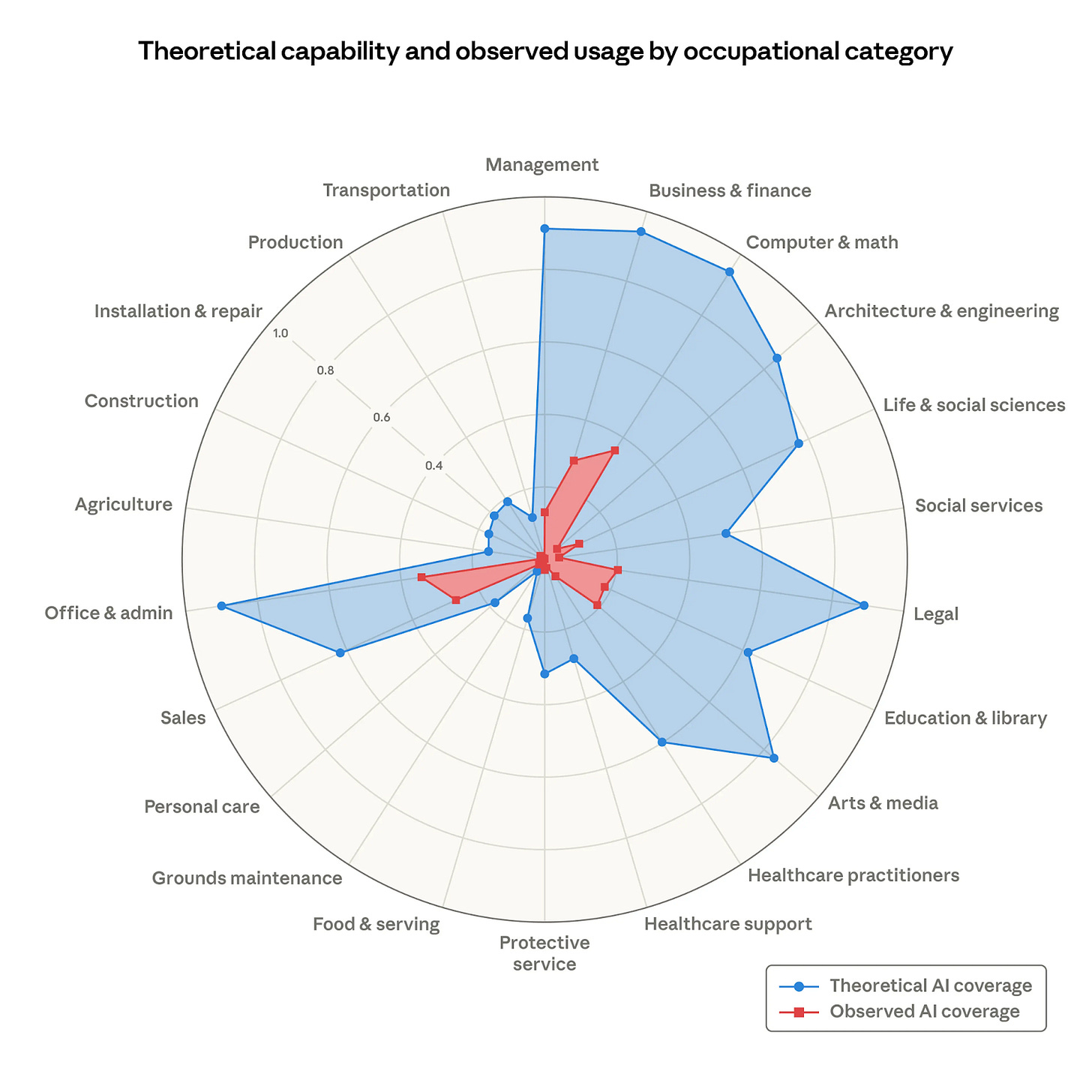

Two weeks ago, Anthropic published a spider chart of “Theoretical capability and observed usage by occupational category” for LLMs. The chart immediately went viral.

The chart highlights a few key points. Current LLMs and other forms of AI are already good at solving problems that can be tackled with software alone, and they are expected to get even better at them. Computer and math, legal analysis, business, and finance, for example, are software-heavy and data-heavy fields.

These are typically what one would call “desk jobs” or jobs that can be done with the help of a computer. They involve limited direct interaction with the physical world. Obviously, you can design a building on a computer, but the actual construction of the building is a physical activity.

You can do a lot of theoretical teaching of various subjects through a computer, but you still need labs to run actual physical experiments (for example, a biology experiment).

Physical AI is what happens when you give a machine a body and tell it to figure out the real world.

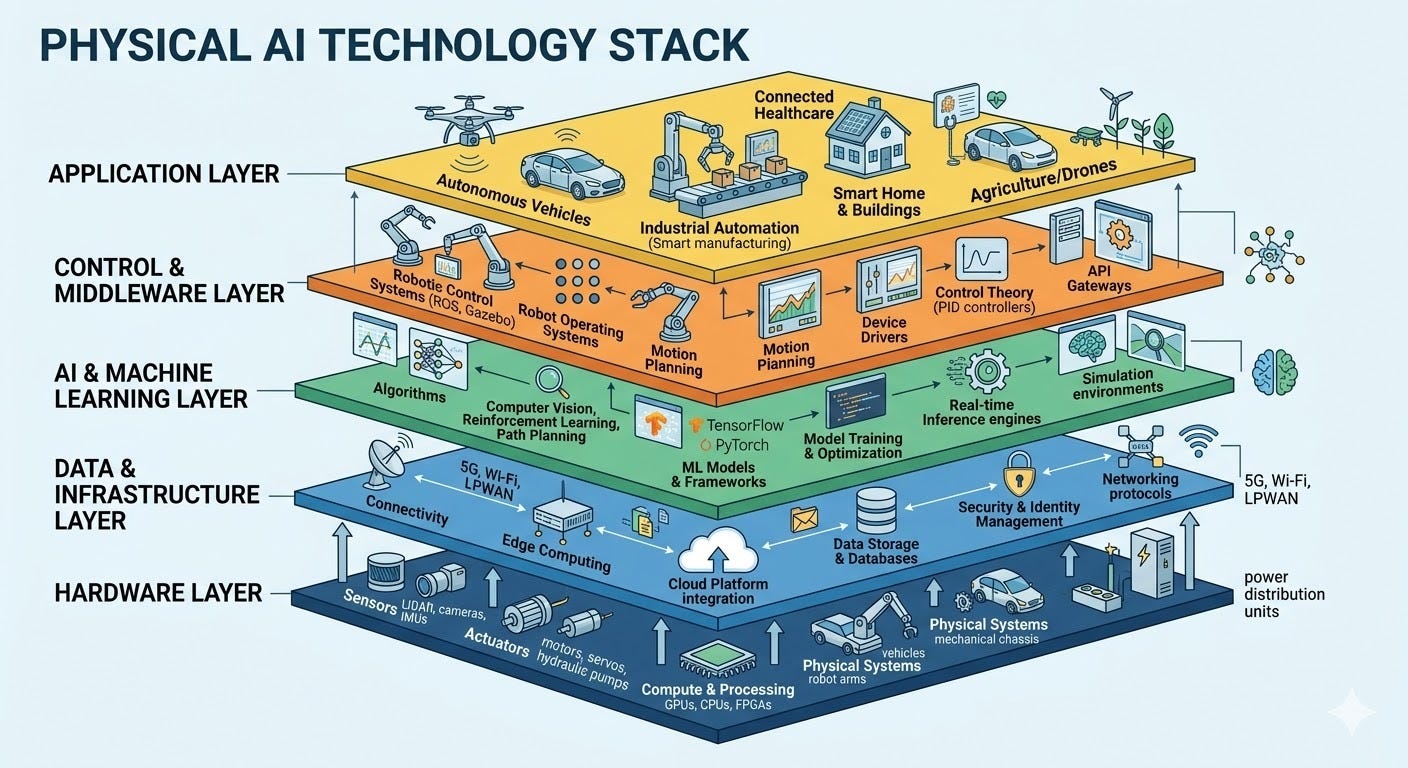

Physical AI has to sense what’s happening around it using sensors, figure out what the hell is going on using edge compute, decide on a course of action, and then actually execute that action IRL, all while continuously taking in feedback on what it sees, what it does, and how effective its actions are.
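To make that loop concrete, here is a minimal Python sketch of a sense, decide, act, and feedback cycle. Everything in it is hypothetical: a single distance sensor, a made-up 2-meter safety margin, and a toy speed policy stand in for real perception and planning stacks.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    # Hypothetical single reading: distance to the nearest obstacle ahead
    distance_to_obstacle_m: float


def sense(true_distance_m: float) -> Observation:
    """Stand-in for reading real sensors (cameras, LiDAR, etc.)."""
    return Observation(distance_to_obstacle_m=true_distance_m)


def decide(obs: Observation, safe_distance_m: float = 2.0) -> float:
    """Toy edge-compute policy: return a speed command between 0 and 1."""
    if obs.distance_to_obstacle_m <= safe_distance_m:
        return 0.0  # inside the safety margin: stop
    # Slow down linearly over the last 5 m before the margin
    return min(1.0, (obs.distance_to_obstacle_m - safe_distance_m) / 5.0)


def run_loop(start_distance_m: float, steps: int,
             dt_s: float = 0.1, max_speed_mps: float = 2.0) -> List[float]:
    distance_m = start_distance_m
    history = []
    for _ in range(steps):
        obs = sense(distance_m)        # 1. sense the world
        command = decide(obs)          # 2. decide on an action
        distance_m -= command * max_speed_mps * dt_s  # 3. act: the machine moves
        history.append(distance_m)     # 4. feedback: the next cycle senses the result
    return history
```

Starting 10 meters from an obstacle, `run_loop(10.0, 200)` slows the machine as it closes in and never crosses the 2-meter margin; starting inside the margin, the machine never moves.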

If a software-only AI hallucinates, the harm comes from someone acting on a bad output. If a physical AI system messes up, the machine itself can damage property, break equipment, or injure someone.

When I worked on Physical AI systems in agriculture, we were building a unit with cameras and compute that could be mounted on a chemical sprayer. As the equipment moved through the field, the cameras took images, and the compute unit analyzed the images to identify the presence of weeds.

If a weed was present, the compute unit would send a signal to activate the nozzle spraying mechanism to squirt some chemical on the weed and nowhere else. All of this had to happen while the sprayer was moving at 15 miles an hour through a bumpy field.
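Here is the back-of-the-envelope timing math behind that constraint, as a small Python sketch. The camera-to-nozzle offset and the latency figures are illustrative assumptions, not the numbers from the system I worked on.

```python
from typing import Optional

MPH_TO_MPS = 1609.344 / 3600  # 1 mile = 1609.344 m exactly


def nozzle_fire_delay_s(speed_mph: float, camera_to_nozzle_m: float,
                        inference_latency_s: float,
                        valve_latency_s: float) -> Optional[float]:
    """Seconds to wait after image capture before opening the valve.

    Returns None if the weed will already have passed the nozzle,
    i.e. the vision and valve pipeline is too slow for this speed.
    """
    speed_mps = speed_mph * MPH_TO_MPS
    travel_time_s = camera_to_nozzle_m / speed_mps
    delay_s = travel_time_s - inference_latency_s - valve_latency_s
    return delay_s if delay_s >= 0 else None


speed_mps = 15 * MPH_TO_MPS
print(f"{speed_mps:.2f} m/s")                  # 6.71 m/s
print(f"{speed_mps * 0.1:.2f} m per 100 ms")   # 0.67 m per 100 ms
# Hypothetical rig: camera 1.0 m ahead of the nozzle, 80 ms inference, 20 ms valve
print(f"{nozzle_fire_delay_s(15, 1.0, 0.080, 0.020):.3f} s")  # 0.049 s
print(nozzle_fire_delay_s(15, 0.5, 0.080, 0.020))             # None
```

At 15 mph, every 100 ms of processing means the sprayer travels roughly two-thirds of a meter, so shaving latency directly buys placement accuracy.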

It was challenging to gather enough data to train the model to work across different soil, weather, and lighting conditions, as well as different crops. We used several methods, including simulation-to-real (sim-to-real) transfer and data fusion. It took a huge effort to get the Physical AI system to work efficiently while generating economic surplus for the customer and the ecosystem.

Obviously, safety and regulation are big challenges for Physical AI systems like autonomous vehicles. If a self-driving car runs over a kid in front of a school, that is a bad outcome all around.

Physical AI also changes which jobs are still valuable, which parts of an existing job are useful and which are not, and which new jobs we need. Truck drivers might have to become network operators or maintenance engineers if their trucks become autonomous.

Robots picking your packets

When I was studying the Toyota Production System in my undergraduate program, our professors would get almost orgasmic pleasure when talking about preventive and scheduled maintenance, and would show us charts of how it improved uptime and quality.

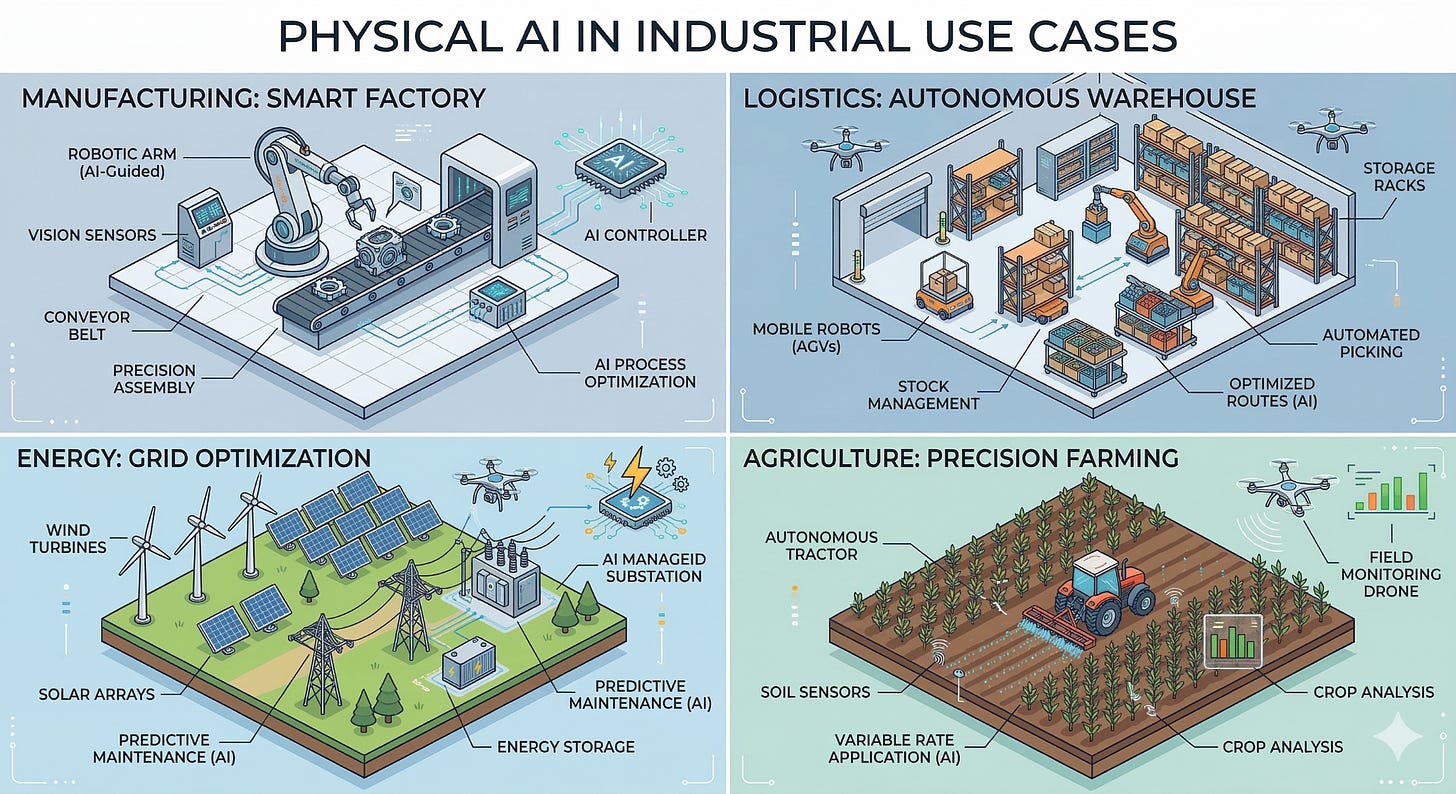

With sensors, machines and robots can now self-diagnose, alert maintenance staff, and schedule their own maintenance before they croak due to a severe issue. This is a classic example of physical AI in a manufacturing environment.
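A very simple version of that self-diagnosis is anomaly detection on a sensor stream. The sketch below flags readings more than three standard deviations from a trailing window's mean. The window size, threshold, and the use of vibration readings are assumptions for illustration; real predictive maintenance systems use far richer models.

```python
from collections import deque
from statistics import mean, stdev
from typing import List, Sequence


def maintenance_alerts(readings: Sequence[float], window: int = 20,
                       z_threshold: float = 3.0) -> List[int]:
    """Return indices of readings more than z_threshold standard deviations
    away from the mean of the preceding `window` readings."""
    history = deque(maxlen=window)  # trailing window of recent readings
    alerts = []
    for i, x in enumerate(readings):
        if len(history) == window:
            recent = list(history)
            mu, sigma = mean(recent), stdev(recent)
            if sigma > 0 and abs(x - mu) / sigma > z_threshold:
                alerts.append(i)  # e.g. notify staff and schedule a work order
        history.append(x)
    return alerts


# Hypothetical vibration readings (mm/s): steady operation, then a spike
vibration = [1.0, 1.1] * 15 + [5.0]
print(maintenance_alerts(vibration))  # [30]
```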

For my graduate thesis, I built a system that would shine a laser on a newly manufactured gear, capture an image with a camera system, and then create a profile of the gear’s surface. It was an early computer vision system.

The digital profile would then be compared with the master design for that gear to understand any deviation from the design spec. The system sampled gears and, if it detected a deviation from spec in the manufactured unit, would alert the operator to determine the cause.
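A stripped-down version of that comparison might look like the Python sketch below. It assumes the measured profile has already been aligned to the master design and sampled at the same points, which is the hard part in practice. The profile values and the 0.05 mm tolerance are hypothetical.

```python
from typing import Sequence, Tuple


def max_profile_deviation(measured: Sequence[float],
                          master: Sequence[float]) -> float:
    """Largest absolute gap between measured and design profiles,
    assuming both are sampled at the same, already-aligned points."""
    if len(measured) != len(master):
        raise ValueError("profiles must have the same number of points")
    return max(abs(m - d) for m, d in zip(measured, master))


def inspect_gear(measured: Sequence[float], master: Sequence[float],
                 tolerance_mm: float = 0.05) -> Tuple[bool, float]:
    """Return (within_spec, deviation); False would alert the operator."""
    deviation = max_profile_deviation(measured, master)
    return deviation <= tolerance_mm, deviation


master_profile = [10.00, 10.50, 11.00]  # hypothetical radii in mm
ok, dev = inspect_gear([10.01, 10.48, 11.00], master_profile)
print(ok, round(dev, 3))  # True 0.02
ok, dev = inspect_gear([10.00, 10.60, 11.00], master_profile)
print(ok, round(dev, 3))  # False 0.1
```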

It was a faster feedback mechanism than waiting on downstream quality processes. After running additional experiments and understanding the manufacturing process better, we could potentially infer what was causing the variance in manufacturing quality relative to the design spec. (In practice, either the signal was quite weak or our understanding was limited.)

Now imagine a manufacturing system that not only detects when something is going wrong but also fixes potential problems based on feedback. This is the promise and, in some cases, the reality of physical AI as of today.

Physical AI enables adaptive quality control, predictive maintenance, and collaborative robots (also known as cobots, which sounds more like a California nut than a robot to me). Cobots work safely alongside humans and adapt their speed, location, and force based on how nearby humans are working.
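One common way to implement that adaptation is speed and separation monitoring: the closer a person gets, the slower the robot moves. The sketch below uses a linear ramp between a hypothetical 0.5 m stop distance and a 2.0 m full-speed distance. Real cobots derive these limits from safety standards such as ISO/TS 15066 and a risk assessment, not from constants like these.

```python
def cobot_speed_limit(human_distance_m: float,
                      max_speed_mps: float = 1.0,
                      stop_distance_m: float = 0.5,
                      full_speed_distance_m: float = 2.0) -> float:
    """Speed cap for a cobot given the distance to the nearest person.

    Hypothetical thresholds: stop inside 0.5 m, full speed beyond 2.0 m,
    and a linear ramp in between.
    """
    if human_distance_m <= stop_distance_m:
        return 0.0
    if human_distance_m >= full_speed_distance_m:
        return max_speed_mps
    fraction = ((human_distance_m - stop_distance_m)
                / (full_speed_distance_m - stop_distance_m))
    return max_speed_mps * fraction


for d in (3.0, 1.25, 0.3):
    print(d, cobot_speed_limit(d))  # 1.0, then 0.5, then 0.0 m/s
```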

Anytime you visit a warehouse or a manufacturing floor, you have to put on a hard hat and walk along pre-designated lanes marked with yellow lines. This reduces the risk of being run over by a forklift or some other piece of equipment.

Autonomous mobile robots (AMRs), which actively navigate chaotic warehouse floors, dodge forklifts, and fetch inventory, have become commonplace in many advanced warehouses.
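Under the hood, an AMR has to plan a route around obstacles. As a toy illustration, the sketch below runs breadth-first search on a grid map where `#` marks a blocked cell (say, a parked forklift). Production AMRs use more sophisticated planners, continuous maps, and constant replanning; this only shows the core idea of searching for a collision-free path.

```python
from collections import deque
from typing import Dict, List, Optional, Sequence, Tuple

Cell = Tuple[int, int]


def plan_path(grid: Sequence[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-connected path from start to goal, avoiding '#' cells.
    Breadth-first search: fine for a toy grid, not a production planner."""
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    frontier = deque([start])
    while frontier:
        cell = frontier.popleft()
        if cell == goal:
            path = []
            node: Optional[Cell] = cell
            while node is not None:  # walk back to the start
                path.append(node)
                node = came_from[node]
            return path[::-1]
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (r + dr, c + dc)
            if (0 <= nxt[0] < rows and 0 <= nxt[1] < cols
                    and grid[nxt[0]][nxt[1]] != "#" and nxt not in came_from):
                came_from[nxt] = cell
                frontier.append(nxt)
    return None  # goal unreachable


floor = [
    "....",
    ".##.",  # '#' = blocked, e.g. a parked forklift
    "....",
]
route = plan_path(floor, (0, 0), (2, 3))
print(len(route) - 1, "moves")  # 5 moves
```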

Automated storage and retrieval systems (AS/RS) have been around for a long time, but they have become more advanced, with robotic arms equipped with tactile sensors that can pick a wide variety of items.

Autonomous drones and sidewalk delivery bots, which navigate neighborhoods, recharge automatically, and deliver goods across a wide variety of environments, have the potential to transform last-mile delivery.

Service robots can also be seen in airport clubs and a very, very small number of restaurants.

Much ink has been spilled over autonomous vehicles and self-driving cars, so I won’t spend much time on them in this introductory post. Cities like Los Angeles and Chengdu have used physical AI to make traffic flow more efficiently, though LA traffic might be too much for even AI to handle!

The architecture of AI-integrated traffic systems is typically structured into four layers: sensing, networking, processing, and control. The sensing layer includes devices such as LIDAR, inductive loops, and environmental sensors that capture real-time data. The networking layer ensures rapid and secure transmission of this data to the processing layer, where edge and cloud computing platforms run AI models for traffic forecasting, optimization, and anomaly detection. Finally, the control layer executes the decisions through traffic signals, digital signage, and route guidance systems.
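The four layers can be sketched as a simple pipeline. In the toy Python version below, the sensing layer reports queued vehicles per approach, the networking layer just passes the data along, the processing layer splits a 90-second signal cycle in proportion to demand (a deliberately simple stand-in for real AI forecasting and optimization), and the control layer emits the green times. The cycle length, minimum green time, and approach names are hypothetical, and the sketch ignores yellow and all-red intervals.

```python
from typing import Dict


def sensing_layer(raw_counts: Dict[str, int]) -> Dict[str, int]:
    """Stand-in for LiDAR / inductive-loop data: vehicles queued per approach."""
    return {approach: max(0, n) for approach, n in raw_counts.items()}


def networking_layer(payload: Dict[str, int]) -> Dict[str, int]:
    """A real system would transmit this securely to edge or cloud compute."""
    return dict(payload)


def processing_layer(counts: Dict[str, int], cycle_s: float = 90.0,
                     min_green_s: float = 10.0) -> Dict[str, float]:
    """Split one signal cycle in proportion to demand (toy optimizer)."""
    flexible_s = cycle_s - min_green_s * len(counts)
    if flexible_s < 0:
        raise ValueError("cycle too short for the minimum green times")
    total = sum(counts.values())
    if total == 0:
        return {a: cycle_s / len(counts) for a in counts}
    return {a: min_green_s + flexible_s * n / total for a, n in counts.items()}


def control_layer(plan: Dict[str, float]) -> Dict[str, float]:
    """Would push timings to signal controllers; here it just rounds them."""
    return {a: round(t, 1) for a, t in plan.items()}


greens = control_layer(processing_layer(networking_layer(
    sensing_layer({"northbound": 30, "eastbound": 10}))))
print(greens)  # {'northbound': 62.5, 'eastbound': 27.5}
```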

Physical AI is well-suited to rugged and dangerous environments, such as construction and mining. Autonomous heavy machines like bulldozers, excavators, and dump trucks can grade land and haul ore without a driver in the cab.

Autonomous drones can carry out inspections in construction zones, on oil platforms, in deep mines, or in large cold storage facilities, taking over strenuous and dangerous work from humans.

I once visited a pet food manufacturing plant, and I have to admit, I had never seen so much frozen meat in my life! Walking through the facility to understand their operations was daunting. It was freezing inside, and it was a tough job for the people who worked there.

On the other end of the spectrum, surgical robots are becoming more common, especially for procedures in urology (e.g., kidney surgeries), gynecology (e.g., hysterectomies), and general surgeries (e.g., hernia repairs and colorectal resections).

According to University of California, San Francisco (UCSF) data, 22% of surgeries in the United States are now robot-assisted.

In a robot-assisted surgery, the surgeon sits at a console several feet away from the patient and controls the instruments through a very sophisticated computer-assisted interface. This can lead to more precise and safer outcomes for patients.

Bionics and smart prosthetics can decode neural signals and adapt to a user’s walking gait or grip intent in real time. When I was at Google X, I saw multiple demonstrations of exoskeletons that assist people with daily activities, such as climbing, to improve their mobility.

I have also written extensively about my experience with automation and AI-powered precision weeding systems, autonomous tractors, and precision harvesting robots in my other newsletter, Software is Feeding the World. It is very early days, as the chart from Anthropic at the top of this issue shows, but the need for automation is acute in specific situations, such as specialty crops.

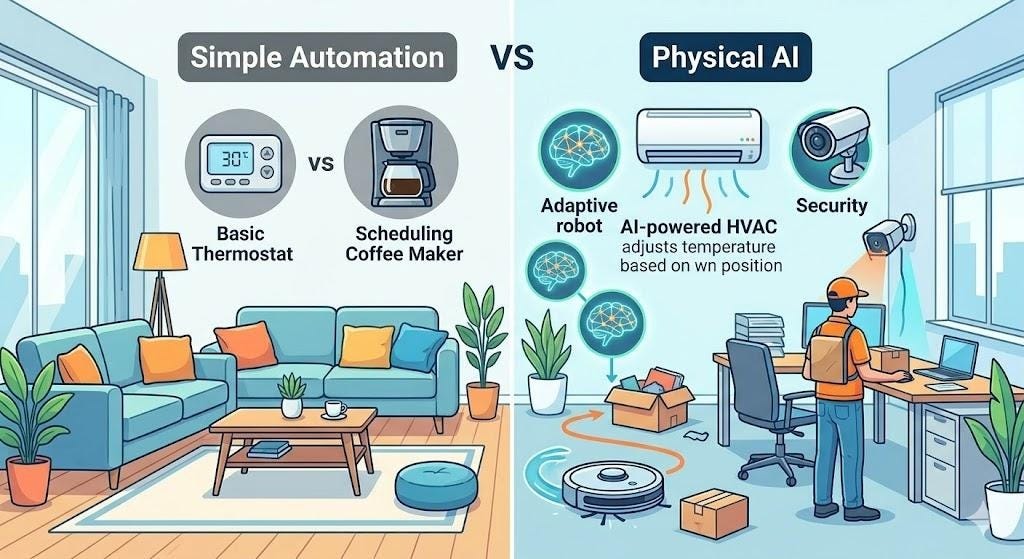

On the consumer side, physical AI and automation are slowly but surely making their way into our homes. Smart vacuums, which do not suck up your phone charger or gobble up a sock, are now commercially available (though still at too high a price point for mass adoption).

What do the funding models look like?

So, how does a startup get started in this space? What kinds of VCs fund Physical AI startups?

Last week, Anastasia Gamick wrote a nice write-up on how to fund companies whose economics do not fit into neat existing capital structures.

Typical VC funds have 10-year lifespans and need power-law returns. The industry tolerates ~75% failure rates because a small number of investments return 20x or more. For diagnostics, some manufacturing, and many hardware businesses, the upside is capped at 3–5x and the math for venture-style portfolio returns doesn’t pencil.
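Here is that portfolio math with made-up numbers: a hypothetical fund writes 20 equal checks, and 15 companies (75%) return nothing. In the power-law case, two winners return 20x; in the capped case, every survivor hits the 5x ceiling. Returns are gross, ignore fees, and are assumed to arrive at the end of a 10-year fund life.

```python
from typing import Sequence


def gross_multiple(multiples: Sequence[float]) -> float:
    """Fund-level multiple for equal-sized checks (before fees and carry)."""
    return sum(multiples) / len(multiples)


def annualized(multiple: float, years: int = 10) -> float:
    """Annual return if the whole multiple is realized at the end of `years`."""
    return multiple ** (1 / years) - 1


failures = [0.0] * 15                       # ~75% of 20 companies return nothing
power_law = failures + [3.0] * 3 + [20.0] * 2
capped = failures + [5.0] * 5               # every survivor hits the 5x cap

for name, portfolio in (("power law", power_law), ("capped", capped)):
    m = gross_multiple(portfolio)
    print(f"{name}: {m:.2f}x, {annualized(m):.1%} per year")
# power law: 2.45x, 9.4% per year
# capped: 1.25x, 2.3% per year
```

Even when every surviving company hits the top of the 3 to 5x range, the capped portfolio returns only about 2% a year over a decade, which is why the venture math doesn’t pencil.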

To summarize: too slow for venture, too early for PE or growth equity, too capital-intensive for bootstrapping, and too profitable for charity. These companies sit in a structural gap between capital categories, so they simply don’t get built.

When your product is an adaptive warehouse robot, scaling might mean building 5,000 physical robots and buying steel, copper, batteries, and sensors for each one. The capital requirement is massive, and unit economics matter far more than they do for software.
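A quick way to see why unit economics dominate: multiply the fleet size by the cost of each robot, then ask how long each robot takes to pay for itself. The $40,000 unit cost and $2,000 monthly margin per robot below are made-up numbers for illustration only.

```python
import math


def fleet_capital_usd(units: int, unit_cost_usd: float) -> float:
    """Cash needed up front to build the fleet (materials, assembly, sensors)."""
    return units * unit_cost_usd


def payback_months(unit_cost_usd: float, monthly_margin_usd: float) -> float:
    """Months of contribution margin needed for one robot to pay for itself."""
    if monthly_margin_usd <= 0:
        return math.inf  # the robot never pays for itself
    return unit_cost_usd / monthly_margin_usd


# Hypothetical numbers for illustration only
print(f"${fleet_capital_usd(5_000, 40_000):,.0f}")  # $200,000,000
print(payback_months(40_000, 2_000), "months")      # 20.0 months
```

Every one of those dollars has to be spent before the robots earn anything, which is what separates this from a software product whose marginal copy costs almost nothing.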

Most early-stage founders start with deep-tech accelerators and government grants. In the US, Physical AI is increasingly viewed as critical to national security, so Advanced Innovation Challenges or US defense grants can provide millions in early capital. Once a prototype shows promise, a Physical AI company typically needs venture capital. Check sizes are much larger than for pure software companies, because capital goes into building datasets, buying compute, and manufacturing.

In industries such as agriculture, funding can include corporate venture arms (for example, NVIDIA’s NVentures) that provide capital for learning or strategic purposes. As companies move towards commercialization, giving up equity to buy inputs to scale manufacturing is not the best approach.

Instead, companies often take on venture debt or revenue-based financing to fund manufacturing at scale, and pair it with a business model that fits them.
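Revenue-based financing typically works by repaying a fixed share of monthly revenue until a capped total, set as a multiple of the amount advanced, has been paid back. The sketch below models that structure with hypothetical terms: a $1M advance, a 1.5x cap, and an 8% revenue share. Actual terms vary widely by lender.

```python
from typing import List, Sequence, Tuple


def rbf_repayments(advance: float, cap_multiple: float, revenue_share: float,
                   monthly_revenue: Sequence[float]) -> Tuple[List[float], float]:
    """Pay `revenue_share` of each month's revenue until the cap is reached.

    Returns the list of monthly payments and any amount still owed.
    """
    owed = advance * cap_multiple
    payments: List[float] = []
    for revenue in monthly_revenue:
        if owed <= 0:
            break
        payment = min(revenue * revenue_share, owed)  # never overpay the cap
        payments.append(payment)
        owed -= payment
    return payments, owed


# Hypothetical terms: $1M advance, 1.5x cap, 8% of revenue, $500k/month revenue
payments, remaining = rbf_repayments(1_000_000, 1.5, 0.08, [500_000] * 48)
print(len(payments), sum(payments), remaining)  # 38 1500000.0 0.0
```

At a steady $500,000 in monthly revenue, the advance is repaid in 38 months, for $1.5M total, with no equity given up. If revenue dips, payments shrink with it, which is the main appeal compared with fixed debt service.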

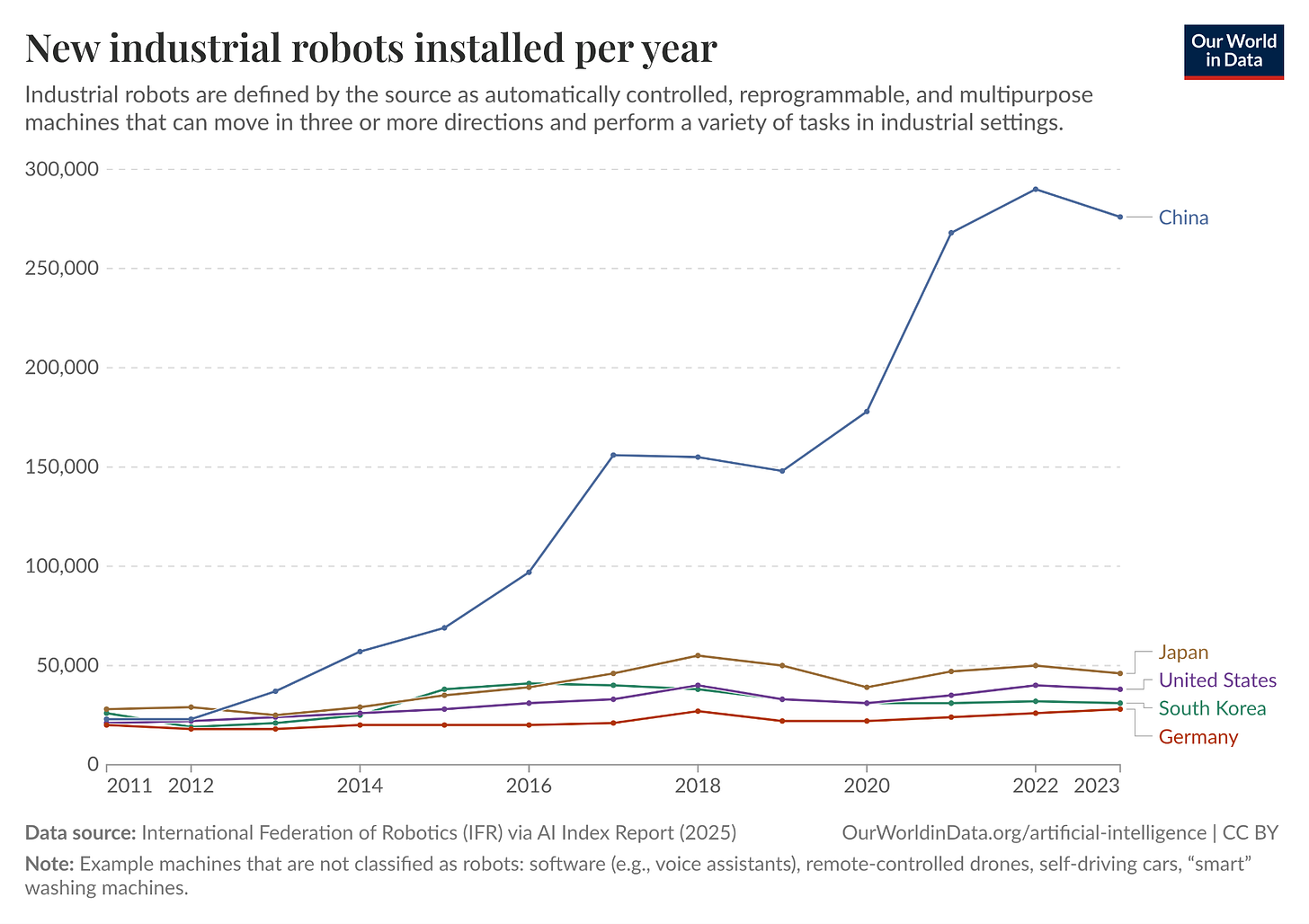

Industrial robots are being installed at a fast clip, with China leading the way. Waymo now accounts for more than 25% of app-based rides in San Francisco. Ring cameras and doorbells that can identify a dog coming and going through your front door are fairly common. (The next step is figuring out what to do with that signal.)

The adoption of physical AI is going to accelerate over the coming years and decades as AI capabilities become more powerful, populations in certain parts of the world age, and population growth slows.

Jacquard’s Loom will cover all the areas mentioned in today’s edition, including various business models, product and business strategies used by companies in the space, what’s happening in the ecosystem, and sometimes the implications of automation and physical AI for the economy and society.
